Emily Howell is an interactive interface that allows both musical and language communication, exploring how software can be an artist, and a musician in particular. A domain previously reserved for humans is now slowly being entered by computers and lines of code.

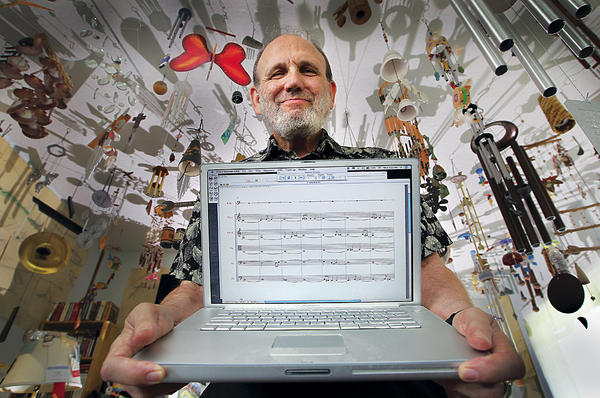

Programmed by David Cope, professor of music at UCSC, Emily Howell has released two albums. She composes and performs her own pieces of music and can adapt to the preferences of the listener.

"The program produces something and I say yes or no, and it puts weights on various aspects in order to create that particular version," says Cope. "I've taught the program what my musical tastes are, but it's not music in the style of any of the styles - it's Emily's own style".
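The yes-or-no loop Cope describes can be pictured with a small sketch. This is not Emily Howell's actual code, which the story does not show; it is a minimal illustration that assumes each candidate piece is summarized by a few numeric "aspects" and that feedback nudges a weight per aspect. The aspect names, the `score` and `update_weights` functions, and the learning rate are all hypothetical.

```python
from typing import Dict


def score(weights: Dict[str, float], features: Dict[str, float]) -> float:
    """Weighted sum of a candidate's aspect values (higher = closer to taste)."""
    return sum(weights.get(name, 0.0) * value for name, value in features.items())


def update_weights(
    weights: Dict[str, float],
    features: Dict[str, float],
    approved: bool,
    rate: float = 0.1,  # hypothetical step size
) -> Dict[str, float]:
    """Return new weights nudged toward the candidate on 'yes', away on 'no'."""
    direction = 1.0 if approved else -1.0
    updated = dict(weights)
    for name, value in features.items():
        updated[name] = updated.get(name, 0.0) + direction * rate * value
    return updated


# Hypothetical aspects of one generated phrase, each scaled to 0..1.
candidate = {"syncopation": 0.8, "dissonance": 0.2}
weights = {"syncopation": 0.0, "dissonance": 0.0}

# The listener says "yes": aspects present in this phrase gain weight.
weights = update_weights(weights, candidate, approved=True)
print(score(weights, candidate) > 0)
```

After a "yes", similar phrases score higher than before; a "no" on the same phrase would move the weights back in the opposite direction.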

Computers do not need to rest; they can compose music 24/7. They also don't need to practice, and they are not bound by physical motor skills when synthesizing sound. Emily Howell introduces us to a new style of music in which software composes pieces that evoke feelings in those who hear them.

Will artificial intelligence prove to be the greatest asset to musicians, or will it eventually perform the art better than we do?

Story via Ars Technica. Image via UCSC.
