Let’s say an old schoolmate, whom you haven’t spoken to in ages but who is still your friend on Facebook, posts a status update that sounds like a suicide note. What do you do? Message the friend? And if so, what do you say? Facebook is rolling out a tool that advises you on what to do and reaches out directly to the person who might be on the brink of suicide. Is a social network morally obliged to offer such a feature, or is it overstepping the boundaries of privacy? This is a sensitive topic.

The company said it has been working on its suicide prevention tool for almost ten years. After a number of suicides in Palo Alto, California, the city where Facebook founder Mark Zuckerberg lives, it was clear the problem was urgent. Palo Alto has a particularly high suicide rate, four to five times the national average. Moreover, now that the suicide rate in the US has risen to a 30-year high, Facebook is making the tool available to all its users. “We’re losing more people to suicide than breast cancer, car accidents or homicides,” said Dr. Dan Reidenberg, the executive director of Save.org.

So, what does the tool provide? Essentially, it’s a drop-down menu that lets you flag a post as content that could be about suicide or self-harm. Experts employed by Facebook then review the post. The person reporting it is given a list of options, including sending a Facebook message to the friend in distress. Facebook suggests a pre-written text, but you can also use your own words. “People really want to help, but often they just don’t know what to say, what to do or how to help their friends,” said Vanessa Callison-Burch, a Facebook product manager working on the project.

Users also see a list of resources, such as help lines and suicide prevention material. These show people how to respond, so that their help is not just well-intended but actually effective.

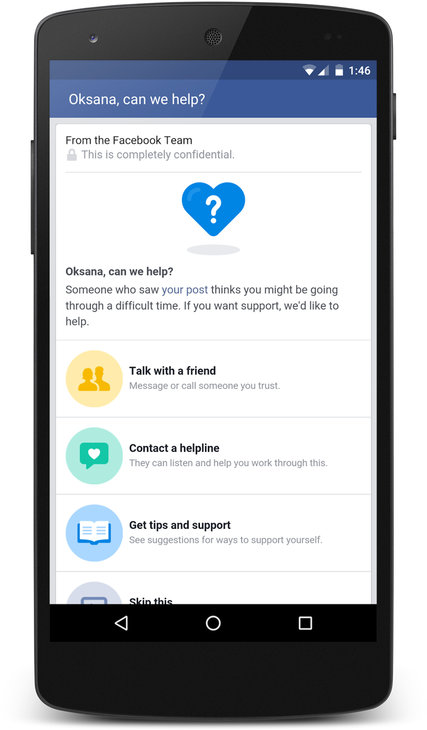

A screenshot showing an example of Facebook’s suicide prevention efforts.

However, the tool is not only for the people doing the flagging, but also for the users who are flagged. If Facebook’s reviewers have reason to believe that somebody is calling for help, then the next time that person logs in, he or she will be shown a similar list of options with suggestions on what to do. They are also advised to reach out to friends who may be able to support them.

Facebook’s reach is so vast, and it is so deeply woven into the social fabric of millions of people, that this tool might be truly useful. On the flip side, however, it can be argued that Facebook’s power to influence and bias our behavior is growing to a worrisome degree. “The company really has to walk a fine line here,” said Dr. Jennifer Stuber, associate professor at the University of Washington and the faculty director of Forefront, a suicide prevention organization. “They don’t want to be perceived as ‘Big Brother-ish,’ because people are not expecting Facebook to be monitoring their posts.”

Source: New York Times. Image: Shutterstock
